Leftwich

Where is the Killer App for “The Connected Home”?

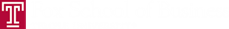

The “Connected Home”, a concept we talked about in class as part of the disruptive innovation section, currently has a low household penetration rate. According to this article on USA Today, NextMarket Insights reports that only 1.48 million American households had smart home systems at the beginning of 2014; this number, however, is projected to skyrocket to 15.03 million households by 2019. As innovative as the idea is, not many Americans are aware of the concept or the technologies that currently back it. Apple, Samsung, Nest, and Google are among a slew of companies releasing dedicated technologies that aim to connect the home through “The Internet of Things” (many of these, such as Apple’s HomeKit and Samsung’s SmartThings platform, were showcased at this year’s CES), but herein lies the problem: there is no standard method of connecting household appliances. Each company offers its own proprietary solution, which makes it hard to mix and match appliances and technologies; in other words, the different components of a connected home won’t necessarily be compatible with each other.

For these technologies to truly disrupt the market and gain widespread adoption, many feel there needs to be a “Killer App” that sways the majority of consumers into investing in the technology: one that is easy to use and provides a high level of standardization.

- What components do you guys think will be required in a “killer app” for a Connected Home, and who do you think is capable of pulling this off?

- Is there any established technology out there, such as Apple’s HomeKit (which allows hands-free Siri communication), that you think will eventually dominate the market?

- What do consumers need in this sector that isn’t yet being provided?

- Lastly, do you agree with the household penetration projection for 2019?
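To help judge that last question, here is a rough back-of-the-envelope sketch of how fast adoption would have to grow to hit the projection. It uses only the two figures quoted above and makes two assumptions of mine: that the 2019 figure is 15.03 million households, and that the span between the two figures is five annual growth periods. The function name `implied_annual_growth` is just illustrative.

```python
# Figures quoted in the post (NextMarket Insights, via USA Today),
# in millions of households. Treating the 2019 figure as millions
# is an assumption.
households_2014 = 1.48
households_2019 = 15.03

# Assumption: five annual growth periods between the two figures.
years = 5


def implied_annual_growth(start, end, periods):
    """Constant yearly growth rate that turns `start` into `end` over `periods` years."""
    return (end / start) ** (1 / periods) - 1


growth_multiple = households_2019 / households_2014
rate = implied_annual_growth(households_2014, households_2019, years)

print(f"Overall growth: about {growth_multiple:.1f}x")
print(f"Implied average annual growth: about {rate:.0%}")
```

Under those assumptions, the projection implies roughly a tenfold increase, which is worth keeping in mind when deciding whether the forecast is realistic without a standard or a killer app.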

Unsustainable Business Models: They Skip the “Search” Phase

In this blog post on Strategyzer, Nabila Amarsy outlines the three essential requirements for a successful business venture:

- The Right Value Proposition

- The Right Business Model

- Flawless Execution

This might seem like common sense, but Amarsy goes on to define what she calls the “search” phase of developing a business idea and argues that its absence lies at the root of many unsuccessful business ventures. The “search” phase takes place in conjunction with the first two requirements outlined above, and basically means “producing market evidence that an idea is going to work”. This phase can be viewed as a loop (refer to the picture above) that repeats until enough research, prototyping, and testing have been done to conclude that the idea will work. Skipping the “search” phase is referred to as premature scaling, and Amarsy claims it can kill any business. Flawless execution can only be achieved after appropriately exiting the “search” phase.

What do you guys think about this? Should Christensen (and his co-authors) have included this in their article “Reinventing Your Business Model”? Do you think many people just assume their idea is great because they themselves (and maybe a select inner circle) think so? And what of the companies that skip this phase but are still successful (such as large corporations that invest heavily in R&D, which Amarsy points out is not synonymous with “searching”)? Are they just lucky? And how much time should be invested in the “search” phase? Finally, this idea is very relevant to our capstone project. How many teams think they successfully conducted a “search” phase that enabled them to conclude their idea was worth pursuing?

————————————————————————————————————————————————————

“We’re a classic MBA case study in how not to introduce a product. First we created a marvelous technological achievement. Then we asked how to make money on it.”

–Iridium Interim CEO John A. Richardson, August 1999

Performance Reviews: Self-Evaluation?

In this Forbes article, the author lists the 10 biggest mistakes bosses make in performance reviews. Instead of re-listing all of them here, I’ll let everyone read the article for themselves. Judging by the article, and by other posts on this blog about performance reviews, I think we can all agree that there is a lot of frustration in the corporate world over how performance reviews are conducted, and that many mistakes are being made. There are a lot of opinions on what constitutes a good or bad review, or a good assessment system, but all of this controversy got me thinking: why don’t employees just evaluate themselves?

Instead of having a boss review your performance over the last quarter/year/whenever and trusting that they are aware of all you have contributed, what do you guys think about employees assessing themselves? They would point out how they have benefited the company in the context of their specific role and what is expected of them, and also point out areas for improvement. Obviously an employee is not going to want to give themselves a bad review, so maybe there could be a hybrid system in which the employee strictly lists contributions that a supervisor/boss may have forgotten about or overlooked, and leaves the negatives to the supervisor.

This is just a thought, but one I have not come across in our class discussion of this topic. Do you guys think this could be a viable alternative? Have any of you seen this done in the real world? If so, did it work? Would this work in our class, or would that be a bad idea?

Laziness and First Impressions: Barriers to Integrative Thinking

In this article, author Michael Michalko argues that cognitive laziness is one of the greatest barriers to integrative thinking. He points out that first impressions of problems, just like first impressions of people, are narrow and superficial. If this mentality never changes, it prevents alternative ways of looking at the problem, meaning that integrative thinking will never surface. To remove any biases or assumptions resulting from a first impression, Michalko recommends taking Leonardo da Vinci’s advice (always look at a problem in at least three different ways in order to understand it better) or Sigmund Freud’s (“reframe” a problem in order to transform it and look at it from a different perspective).

Both techniques require patience. Unfortunately, cognitive laziness is largely a product of impatience.

Thus, cognitive laziness becomes a barrier to entry, the entry point being integrative thinking. In order to gain a deeper understanding of a problem at hand, particularly in a business context, how does one motivate oneself or others to overcome cognitive laziness? Getting rid of biases and assumptions is easier said than done. How would you go about this to achieve integrative thinking? Any thoughts?

3D Printing: Consumer Revolution?

In this Forbes article, columnist Freddie Dawson discusses 3D printing and raises questions about how disruptive the technology really is. As Clayton Christensen has stated many times, whether an innovation is disruptive depends heavily on its price point and accessibility; 3D printing has existed for a while, but it is making headlines now because of its continually decreasing price point (and thus expanding accessibility). The technology is still not cheap and can only make smaller objects using very specific materials (which is why there is controversy surrounding its level of disruption in the near future), but one cannot deny that there is huge potential for disruption in the long term. Optimists in the business world are referring to this inevitable future as the “Consumer Revolution”, a period in which the 3D printer will become a standard household object, enabling entrepreneurial individuals to produce almost any object they can think of, of any size and material.

Questions to consider:

- If owning an affordable and versatile 3D printer is an inevitable reality, how disruptive do you think this will be to the retail, supply chain, and manufacturing sectors?

- Or will 3D printing never become that disruptive, serving only as an alternative means of production?

- If users are able to “download” and “print” physical objects that normally would need to be shipped, do you think this could disrupt the online retailing industry and big-name giants such as Amazon?

- Some, including myself, would interpret this as owning the means of production, something that has always been controlled by large corporations under capitalism. With a more communistic foundation behind the technology, will this have adverse effects on capitalism in general?

- Furthermore, with the United States’ reliance on Eastern countries such as China for cheap labor and production, how do you think 3D printing could affect East-West relations and the global economy?

AI + IoT = Good or Bad?

According to this article, the integration of artificial intelligence (AI) with the Internet of Things (IoT) is inevitable, and prominent figures such as Stephen Hawking, Bill Gates, and Elon Musk warn against what may result from the combination. Fears range from the simple (putting employees out of work) to the extreme (Terminator-style Skynet scenarios), but there are also people like Kevin Kelly (founding executive editor of Wired magazine) who expect the integration of IoT and AI to bring huge benefits to business (e.g. cutting costs by automating tasks) as long as the machines remain “consciousness-free”.

With over 50 billion devices expected to be connected to the internet by 2020 (roughly 7 times the entire human population as of now), what do you think the implications will be if billions of embedded devices are eventually connected to artificially intelligent machines? Do you think AI is still too far in the future to even worry about? And, arguably as important, how can AI become a disruptive innovation in itself? Do you agree with Kevin Kelly that startups will soon base their business plans on “cognitizing” what was previously “electrified”, with AI eventually becoming a commodity like electricity is today?

PlayStation Mobile Shutting Down: Reflections on the “Internet of Things”

As confirmed in this article, Sony has announced that it is shutting down its PlayStation Mobile service; this happened just ONE DAY after I recommended in my presentation last Tuesday that Sony use the service as part of its strategy to protect itself against new entrants such as OnLive that are attempting to disrupt the market. Launched in October 2012, the service will officially end on July 15, giving it a lifespan of a mere 2 years and 9 months (for those who weren’t in class for my presentation, PlayStation Mobile was basically a framework for an “app store” that catered to indie developers and hosted exclusive games and other content). A listing here shows that the service was compatible with 72 devices, including the PlayStation Vita and PlayStation TV, taking full advantage of the “Internet of Things”, or IoT. Yet, despite such a broad range of compatibility and a strong brand name, the service was ultimately known for poor developer support, a weak game library, and a small user base.

In light of this failure by an established incumbent, the continued success of other services that take advantage of IoT devices (such as iOS, Android, and Valve’s Steam), and a wave of new entrants (such as OnLive, as discussed in my presentation, and Nvidia’s Shield, as posted by James Brunetto), what do you think a company needs to do in order to achieve success and stay relevant with a service that is meant to be compatible with a wide range of popular devices? Was PlayStation Mobile not innovative enough? And what features would a service like this in the gaming industry need in order to truly be considered disruptive?